FOD#47: AI Goes Mainstream: Celebrity Models, Beer-Brewing Algorithms, and the Future We Live In

Get a list of must-see interviews for understanding the future, plus Jamba, DBRX, and the freshest AI news and research papers

Since when does the launch of an open-source model get covered in the press as a piece of reportage? But here we are: WIRED covering the launch of DBRX, a new open-source model from Databricks.

This level of transparency and public relations is something new in the AI world. Is it a clever marketing move, or are algorithms truly becoming the hottest stars in town? Jensen Huang, at the opening of GTC, ‘reminded’ attendees, “I hope you realize it’s not a concert but a developer conference. There will be a lot of science, algorithms, computer architecture, mathematics.” It was a prepared joke, and it highlighted that everything about GTC, Jensen Huang included, was staged like a rock concert. In this light, it’s not surprising that Demis Hassabis, Google DeepMind CEO, has just been knighted, or that every US federal agency must now hire a chief AI officer. Each day, AI keeps making headlines. But considering the amount of science stuffed into this pie, it’s a type of celebrity I can totally become a fan of.

Not that long ago, Yann LeCun, Meta’s Chief AI Scientist, found his 100-meter portrait displayed on the Burj Khalifa during the World Government Summit in Dubai. When I have a chance to interview him, I’ll ask whether, back in 1989, while demonstrating the practical application of backpropagation at Bell Labs, he could have imagined that he, a nerd, would become such a star.

In fantastic times, we live. With AI permeating every aspect of our lives.

But Dubai extravagance aside: you know AI is really getting serious when Belgian brewmasters leverage it to enhance beer flavors. They just used ML to analyze the chemical composition and flavor attributes of 250 Belgian beers in order to predict taste profiles and consumer appreciation. Cheers to that!

Today, we won’t offer you any architecture explanations. Instead, we encourage you to dedicate some time to these videos, which offer a glimpse into the future we’re already living in. I plan to watch these with my kids. If they switch from wanting to be YouTubers to becoming ‘hot’ AI scientists, I’ll be fully supportive.

For an evening with family

A two-hour blockbuster: Jensen Huang and his Nvidia GTC Keynote

A school project: A little guide to building Large Language Models in 2024 by Tom Wolf from Hugging Face

For an evening with friends (who are also interested in investing)

AI Ascent 2024 by Sequoia, including:

The AI opportunity: Sequoia Capital’s AI Ascent 2024 opening remarks

What’s next for AI agentic workflows — Andrew Ng, AI Fund

Trust, reliability, and safety in AI — Daniela Amodei, Anthropic and Sonya Huang

Making AI accessible — Andrej Karpathy and Stephanie Zhan

Open sourcing the AI ecosystem — Arthur Mensch, Mistral AI and Matt Miller

What’s next for photonics-powered data centers and AI — Nick Harris, Lightmatter

What’s next for AI agents — Harrison Chase, LangChain

For a car ride

Sam Altman: OpenAI, GPT-5, Sora, Board Saga, Elon Musk, Ilya, Power & AGI | Lex Fridman Podcast #419

If you still have three hours left

Sholto Douglas (DeepMind) & Trenton Bricken (Anthropic) — How to Build & Understand GPT-7’s Mind

And if you want someone grumpy about AI, you can turn to these two posts by Gary Marcus (about the GenAI bubble and the race between the positive and the negative), who also thinks he is an AI celebrity.

Hottest Releases of the Week (pam-pam-pam!):

Databricks with Mosaic’s DBRX

DBRX is a state-of-the-art open LLM by Databricks that outperforms GPT-3.5 and rivals Gemini 1.0 Pro, especially in coding tasks. Its fine-grained mixture-of-experts (MoE) architecture improves efficiency, offering up to 2x faster inference than LLaMA2-70B with a significant size reduction. Databricks attributes DBRX's strong results across various benchmarks to training on a curated 12T-token dataset. The model is available on Hugging Face and is integrated into Databricks’ GenAI products, marking a leap in open-source LLM development.

The former CEO of Mosaic, now a Databricks VP, commented on the outstandingly low $10 million spent on training DBRX.

→ Read one of our most famous profiles: Databricks: the Future of Generative AI in the Enterprise Arena

JAMBA news

Just last week, we discussed the Mamba architecture that rivals the famous Transformer. This week, the news is even more impressive: AI21 introduced a mix of the two. Jamba, AI21’s pioneering SSM-Transformer model, merges Mamba SSM technology with the Transformer architecture and offers a substantial 256K context window. It outperforms or matches leading models in efficiency and throughput, achieving 3x throughput on long contexts. It also stands out for fitting up to 140K tokens of context on a single 80GB GPU. With its open weights and hybrid architecture, Jamba makes this kind of model widely accessible. Here you can read the paper.

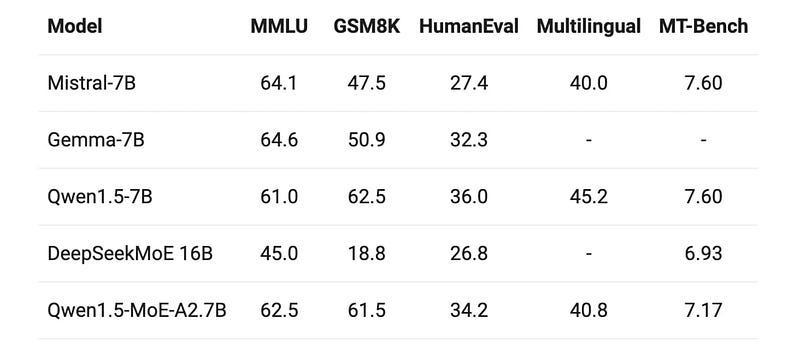

Other impressive releases (both on March 28):

(though there are discussions about how trustworthy current benchmarks are)

News from The Usual Suspects ©

Microsoft’s New Azure AI Tools

The newly announced tools enhance the security of generative AI apps: Prompt Shields against prompt injection attacks, groundedness detection, safety templates, risk evaluations, and monitoring. The aim is to protect generative AI applications against these risks.

OpenAI’s Voice Engine

OpenAI introduces a model that generates natural speech from text and audio samples, and is previewing it cautiously to prevent misuse. It targets diverse applications, with safety measures that include consent requirements and watermarking for traceability.

Chips — “DeepEyes”

Chinese company Intellifusion has launched a cost-effective AI processor that it says is 90% cheaper than GPUs and that sidesteps U.S. sanctions. The company aims for a wide impact on the AI market, highlighting China’s push for affordable, domestic AI technology.

Last week, a few exciting research papers were published. We categorize them for your convenience 👇🏼

🤍 Thank you for reading. Please also send this newsletter to your colleagues if it can help them enhance their understanding of AI and stay ahead of the curve.